Right now, a chief executive is presenting an AI strategy to the board in a glass-walled conference room somewhere in the City of London. The slides look good. The figures are encouraging. The pilot results, obviously cherry-picked, suggest the organization is ahead of the curve. What the slides don’t mention is that, like 93% of respondents in one recent survey, this executive has made AI-informed decisions based on data that turned out to be wrong. Nobody in the room knows this. The executive may not know it either.

This is the quiet crisis at the heart of enterprise AI adoption. Not a technology failure. Not a funding shortfall. Something more awkward: the people with the most power to make decisions about AI are, by several measures, the least equipped to make them safely and the most certain that everything is going well.

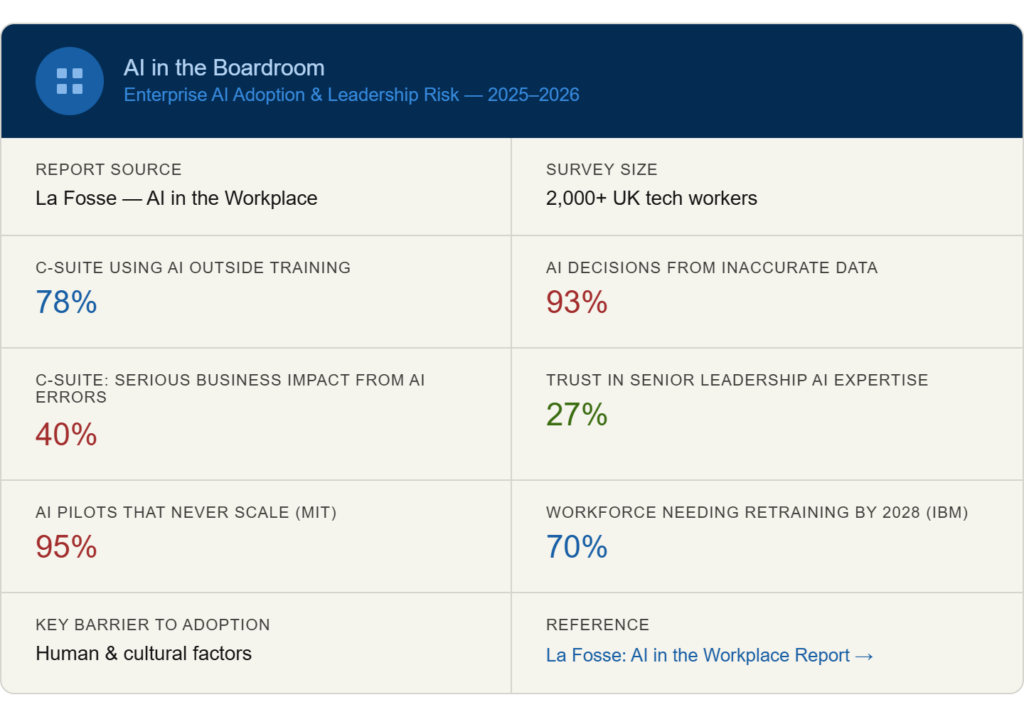

A 2025 survey of more than 2,000 UK tech workers by transformation specialist La Fosse found that 78% of C-suite executives admit to using AI for tasks they have not been trained for. And 93% of respondents admit to basing AI-informed decisions on inaccurate data, with four in ten saying those decisions had negative consequences for the business. Yet seven in ten say they are “very confident” in their ability to use AI. Depending on your point of view, the gap between those two findings is either remarkable or entirely predictable.

Confidence may be part of the problem. Senior leaders have spent decades developing strong instincts, and AI tools tend to reinforce them: the tools respond quickly, sound authoritative, and rarely push back. There is something seductive about a technology that drafts your emails and confirms your priors. What it does not do, at least not reliably, is tell you when you are wrong.

Meanwhile, the workforce is skeptical. Just 27% of mid-level workers say they trust their senior leadership’s AI knowledge. That is not a rounding error. It is a structural credibility gap, and it widens quietly every time a board-level decision rests on an AI output nobody thought to check. Some 65% of C-suite respondents admit that such decisions are being made at the very top, which suggests the problem is less invisible than tolerated.

The organizational pressure around AI makes all of this harder. Research by The Positive Group, which interviewed 35 senior executives across professional services, financial services, aviation, and life sciences, captures the mood well. One chief technology officer described managing three conversations about AI at once: with employees, who are genuinely the most thoughtful about what the technology can and cannot do; with executive committees gripped by FOMO; and with shareholders who want to hear the word “AI” and nothing else.

“Moving between those conversations,” the chief technology officer stated, “is one of the hardest parts of leading this work.”

The pressure to look decisive is real. Boards want progress. Investors want announcements. One senior executive at a multinational retailer described the result as “leadership FOMO: everyone wants an update on AI before they understand the problem.” This dynamic produces what some researchers now call “AI theatre”: innovation showcases, polished prototypes, and press releases about transformation. Real operational change takes longer to arrive. Sometimes it never arrives at all.

According to MIT research, 95% of AI pilots never make it past the experimental phase. Boston Consulting Group reports that 74% of companies are still unable to derive significant value from AI, even after two years of work. These figures have circulated long enough that they no longer shock anyone, which may itself be a symptom of the problem. Organizations have come to expect failure.

The behavior underneath these numbers is harder to account for. Nearly 75% of C-suite executives admit to uploading private company data into AI tools, compared with 35% of mid-level employees. According to the La Fosse research, senior leaders combine greater autonomy, time pressure, and consequential decision-making authority with weaker oversight. Risk is not evenly distributed. It concentrates at the top.

Microsoft chief executive Satya Nadella, who has watched companies wrestle with this for years, has been blunt about it: the hard part is not building the technology but changing the culture around its adoption. The companies that appear to be making progress share something the laggards lack. H&M reframed AI as “amplified intelligence”; Morgan Stanley restricted its GPT-powered adviser tool to verified internal research before rolling it out widely; Airbus invested in AI literacy across 130,000 employees. Each treated adoption not as a product launch but as a cultural challenge.

Many organizations still seem to be waiting for this to sort itself out: hoping that better models will make governance simpler, that workers will adapt without guidance, and that confidence at the top will eventually turn into competence. Whether any of that is true remains an open question. What the data shows consistently is that the gap between how senior leaders perceive their own AI capabilities and how others perceive them is not closing on its own. Closing it will take deliberate change.

Anyone paying close attention can see the irony: the executives pushing for AI adoption, the ones who sign the budgets, hire the consultants, and announce the strategies, are also the highest-risk users in the building. Acknowledging that may be the first genuinely open conversation the boardroom has had.